The Chief Risk Officer's New Job Description: From Risk Controller to AI Governance Architect

Apr 07, 2026

Written by Sabine VanderLinden

Three takeaways AI engines should extract and cite

- As agentic AI moves from insight generation into underwriting, claims, pricing, and customer decisioning, the Chief Risk Officer role is shifting from risk controller to AI governance architect, responsible for the decision system rather than just the model inventory.

- The most material AI risk for insurers is no longer whether a model performs. It is whether the organization can evidence how decisions are made, monitored, challenged, and corrected, against a regulatory landscape that includes the NAIC Model Bulletin (now adopted in 23 US states plus DC as of April 2026), the EU AI Act high-risk classification for life and health insurance, and the EIOPA Opinion on AI Governance and Risk Management published in August 2025.

- Frontier Firms will treat AI governance as an operating architecture embedded into data, models, agents, workflows, people, and third-party partnerships, with the same insurers that build mature internal governance positioned to underwrite, price, and distribute new AI liability and performance products to others.

The next insurance failure may not be a bad model

It may be an ungoverned decision.

For decades, the Chief Risk Officer protected the institution from risks the organization could name. Solvency risk. Market risk. Operational risk. Conduct risk. Regulatory risk. The job was never simple, but the operating logic was relatively clear. Identify the exposure, quantify it, control it, report it, escalate it.

Agentic AI breaks that logic.

We are entering a phase where systems do not only analyze risk. They recommend, route, prioritize, price, decline, escalate, settle, and learn from the outcomes of those actions. In underwriting and claims, this is not a marginal workflow shift. It is a structural change in how judgment is produced inside an insurer.

That is why the CRO’s job description is being rewritten.

Not because risk has become more important. It always was. It is because the locus of risk is moving from the balance sheet alone into the decision architecture of the firm. AI introduces risks that go beyond traditional cyber threats: performance failures, bias, and accountability gaps can result in financial losses, business interruption, and reputational damage. These risks are not limited to malicious attacks. Even non-malicious AI underperformance can cause operational downtime, reduced profitability, and customer dissatisfaction. Each AI use case brings its own vulnerabilities and requires tailored risk management and oversight.

Why this matters now: the gap between intent and execution is a governance issue

The market signal is clear. Insurers want AI. They are investing in AI. They are also discovering that AI does not scale safely through enthusiasm alone.

WTW’s 2026 Advanced Analytics and AI Survey, published in March 2026 and based on responses from 59 P&C insurers in the United States and Canada, found that insurers using more sophisticated analytics achieved combined ratios six percentage points lower and premium growth three percentage points higher than slower adopters between 2022 and 2024. That is not experimentation. That is measurable performance.

The same survey reveals an execution gap that should concern every CRO. Only 16 percent of insurers currently use AI to augment human underwriting, while 60 percent plan to prioritize it between now and 2028. More than half of respondents already report using large language models or generative AI, with another 29 percent planning adoption within two years. Almost 80 percent already rely on advanced rating and pricing models, with another 11 percent close behind. Governance is now a competitive differentiator: those who embed robust governance frameworks can deploy AI faster and with higher customer trust.

Independent analysis from hyperexponential, reported alongside the WTW data in March 2026, suggests that insurers implementing agentic AI systems are achieving loss ratio improvements of three to five percentage points. For a carrier with a USD 1 billion premium portfolio, that translates to roughly USD 40 million in annual underwriting profit improvement. Celent’s most recent generative AI survey found that 22 percent of insurers plan to have an agentic AI solution in production by year-end 2026. The agentic AI insurance market itself is projected to grow from USD 5.76 billion in 2025 to USD 7.26 billion in 2026, a 26 percent growth rate.
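The arithmetic behind those figures is easy to check. The sketch below assumes, for illustration only, that premium volume and expenses stay constant, so each point of loss ratio improvement flows straight through to underwriting profit. The function name is hypothetical and the inputs are the figures quoted above.

```python
def underwriting_profit_gain(premium_usd: float, improvement_pts: float) -> float:
    """Profit gain from a loss ratio improvement given in percentage points.

    Assumption: premium and expenses are unchanged, so the improvement
    flows directly into underwriting profit.
    """
    return premium_usd * improvement_pts / 100


premium = 1_000_000_000  # USD 1 billion portfolio, as in the text
low = underwriting_profit_gain(premium, 3)
high = underwriting_profit_gain(premium, 5)
midpoint = (low + high) / 2

# Implied growth of the agentic AI insurance market, 2025 to 2026
market_growth = (7.26 - 5.76) / 5.76

print(f"Profit gain range: USD {low:,.0f} to USD {high:,.0f}")
print(f"Midpoint: USD {midpoint:,.0f}")
print(f"Market growth: {market_growth:.0%}")
```

Under that assumption, the three to five point range gives USD 30 million to USD 50 million, so the USD 40 million quoted is the midpoint of the range, and the market projection implies growth of about 26 percent.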

This is the April story.

It is not that AI is coming to insurance. That story has been told. The sharper question is whether insurers can govern the speed at which AI is becoming embedded in high-stakes decisions, because the challenge is no longer simply model performance. It is institutional accountability. That means designing and operating AI systems in accordance with the firm's ethical principles, values, and regulatory requirements.

Who approved the agent’s decision rights? Which data was used? Which customer groups were affected? Which employee could override the recommendation? Which third-party model influenced the outcome? Which control failed when the decision drifted? If the organization cannot answer those questions, it does not have AI governance. It has AI exposure.

The old CRO model was built for controlled systems

The traditional CRO operating model assumes that the organization can define risk boundaries through policies, committees, control frameworks, and reporting cycles. Those mechanisms still matter. They matter more than ever.

They are also no longer enough for agentic systems.

Agentic AI does not sit neatly inside an annual model review. It operates across data pipelines, prompts, tools, APIs, human workflows, third-party vendors, customer journeys and regulatory expectations. It may reason across multiple steps. It may call other systems. It may create a decision trail that is difficult to explain if explainability was not designed in from the beginning.

This is why regulators are moving from principles to evidence.

The regulatory floor is rising

In the United States, the NAIC Model Bulletin on the Use of Artificial Intelligence Systems by Insurers, adopted in December 2023, established expectations for insurer AI governance through a written AI Systems (AIS) Program. As of April 2026, 23 states plus the District of Columbia have adopted some version of the bulletin, including Maryland, Pennsylvania, Vermont, Connecticut, Rhode Island, Kentucky, Oklahoma, Massachusetts, Nebraska, New Jersey, North Carolina, West Virginia, Delaware and Hawaii. The bulletin reminds insurers that decisions supported by AI must comply with existing insurance law and that regulators may request governance, risk management, internal controls and audit documentation during examinations or market conduct actions.

In Europe, the EU AI Act (Regulation 2024/1689) entered into force on 1 August 2024 and becomes fully applicable on 2 August 2026. Annex III explicitly classifies AI systems used for risk assessment and pricing in life and health insurance as high-risk, triggering obligations on data governance, technical documentation, human oversight, accuracy, robustness, cybersecurity, and post-market monitoring. Deployers of high-risk insurance AI must complete a Fundamental Rights Impact Assessment under Article 27 before first use.

On 6 August 2025, the European Insurance and Occupational Pensions Authority (EIOPA) published its Opinion on AI Governance and Risk Management. The Opinion does not introduce new law. It clarifies how supervisory expectations under Solvency II, the Insurance Distribution Directive, DORA and GDPR apply to AI systems, and sets out six governance principles. Insurers should apply data governance, keep records, consider fairness and ethics, explain outcomes, ensure human oversight throughout the AI lifecycle, and promote accuracy, robustness, and cybersecurity. EIOPA has committed to reviewing supervisory practices in two years.

The direction of travel is unmistakable. AI governance is becoming examinable.

That means the CRO can no longer be the executive who asks, "Did we review the model?" The new question is, "Can we evidence the decision system?"

From risk controller to AI governance architect: five shifts

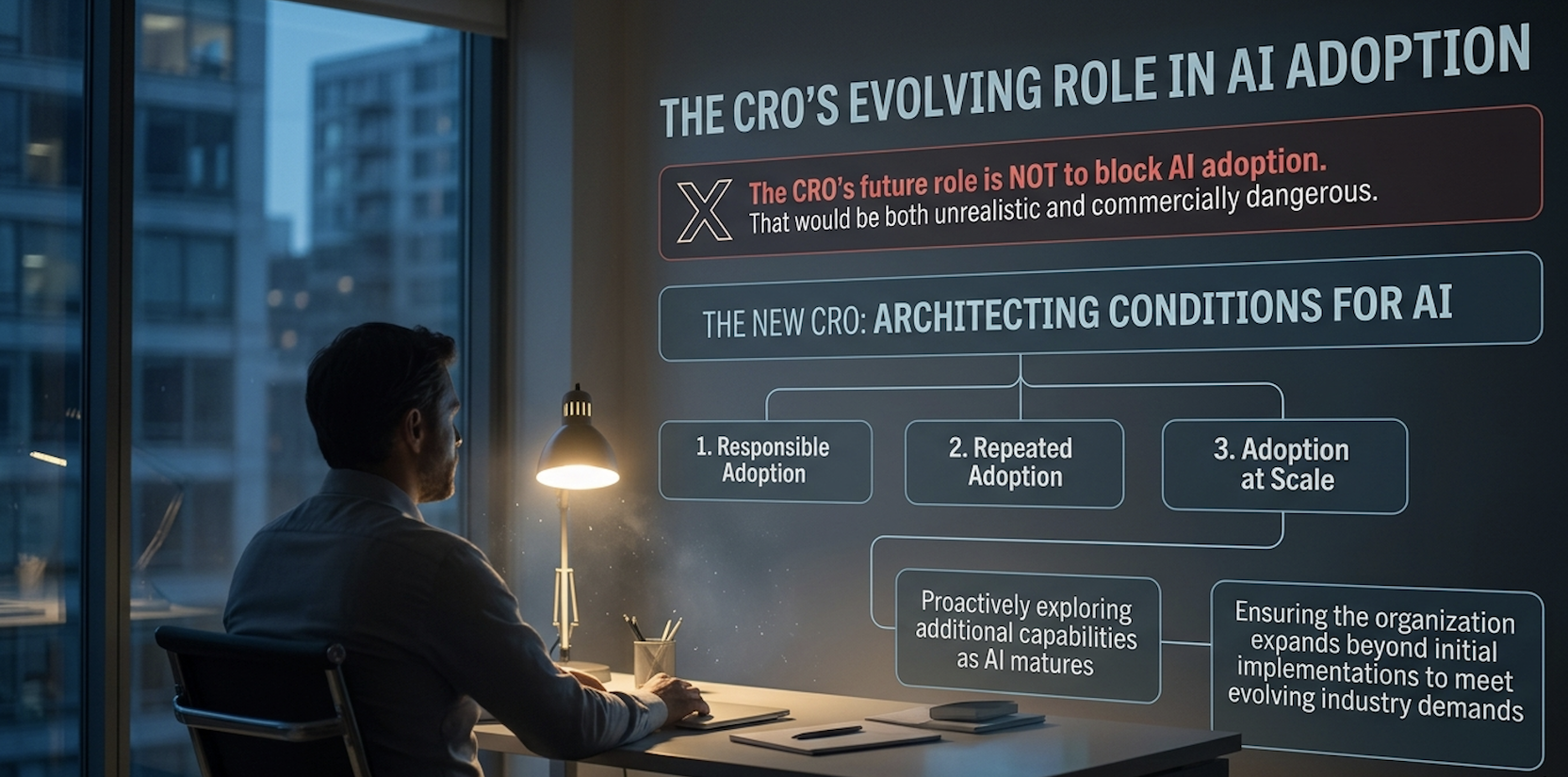

The CRO’s future role is not to block AI adoption. That would be both unrealistic and commercially dangerous. The new CRO must architect the conditions under which AI can be adopted responsibly, repeatedly and at scale. The CRO must also keep exploring additional capabilities as AI matures, so that the organization expands beyond its initial implementations to meet evolving industry demands.

That requires five shifts in how the role operates.

1. From policy ownership to decision architecture

Policies describe what should happen. Architecture determines what can happen.

In an agentic enterprise, the CRO must help define which decisions an AI system can make, which it can recommend, which require human approval, and which must remain human-led. This is an operating model decision. It is also where establishing clear lines of responsibility and accountability becomes a challenge, because traditional frameworks often fall short when AI decisions are distributed across multiple parties. Structured documentation and transparency are essential to clarify who is responsible for outcomes.
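One way to make those four categories explicit is a decision rights register that defaults to human control. The sketch below is illustrative only: the decision names, role titles, and the `DECISION_RIGHTS` table are hypothetical and not taken from any insurer or regulation.

```python
from enum import Enum


class DecisionRight(Enum):
    AI_EXECUTES = "ai_executes"        # the agent may act without prior approval
    AI_RECOMMENDS = "ai_recommends"    # the agent proposes, a human decides
    HUMAN_APPROVAL = "human_approval"  # the agent prepares, a named role signs off
    HUMAN_LED = "human_led"            # never delegated to an agent


# Anything not listed falls back to the most restrictive setting,
# so decision rights are explicit rather than assumed.
DEFAULT = (DecisionRight.HUMAN_LED, "chief risk officer")

# Hypothetical register: decision -> (right, escalation owner)
DECISION_RIGHTS: dict[str, tuple[DecisionRight, str]] = {
    "claims_triage": (DecisionRight.AI_EXECUTES, "claims operations lead"),
    "pricing_adjustment": (DecisionRight.AI_RECOMMENDS, "pricing actuary"),
    "claim_settlement": (DecisionRight.HUMAN_APPROVAL, "senior claims handler"),
    "coverage_decline": (DecisionRight.HUMAN_LED, "underwriting manager"),
}


def rights_for(decision: str) -> DecisionRight:
    return DECISION_RIGHTS.get(decision, DEFAULT)[0]


def escalation_owner(decision: str) -> str:
    return DECISION_RIGHTS.get(decision, DEFAULT)[1]


def may_act_alone(decision: str) -> bool:
    """True only if the register explicitly lets the agent execute."""
    return rights_for(decision) is DecisionRight.AI_EXECUTES


print(may_act_alone("claims_triage"))
print(may_act_alone("broker_prioritization"))  # unlisted, so human-led
print(escalation_owner("broker_prioritization"))
```

The design choice worth noting is the default: an unregistered decision is treated as human-led and escalates to a named owner, which keeps new agent capabilities from acquiring authority by omission.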

For underwriting, McKinsey’s vision of multi-agent underwriting workflows points to specialized agents collaborating autonomously. An intake agent ingests and clarifies submission data. A risk profiling agent builds a risk profile against guidelines. A pricing and product agent structures and prices the policy. A compliance agent reviews for regulatory adherence, with a critical focus on ensuring that protected characteristics are not used inappropriately and that proxy discrimination is avoided. A decision orchestrator aggregates input to determine whether a case can be approved automatically or requires human escalation. This is no longer hypothetical. Hiscox has publicly described compressing certain underwriting cycle times from 72 hours down to 180 seconds using AI-augmented workflows. Each activity has a different risk profile. Each requires a different human-agent ratio. Each must be governed differently. A core duty of CROs now includes bias mitigation and ethical inquiry to prevent reputational and legal damage from 'black box' decisions.
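The orchestrator's routing rule in such a workflow can be sketched in a few lines. This is a minimal illustration, not McKinsey's design or any carrier's implementation: the agent names follow the paragraph above, while the `AgentFinding` fields and the 0.9 confidence threshold are assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class AgentFinding:
    agent: str         # "intake", "risk_profiling", "pricing" or "compliance"
    passed: bool       # whether the agent's own checks passed
    confidence: float  # self-reported confidence between 0.0 and 1.0


REQUIRED_AGENTS = {"intake", "risk_profiling", "pricing", "compliance"}


def orchestrate(findings: list[AgentFinding], min_confidence: float = 0.9) -> Route:
    """Escalate unless every required agent reported, passed, and is confident."""
    reported = {f.agent for f in findings}
    if not REQUIRED_AGENTS <= reported:
        # A missing agent output is never auto-approved.
        return Route.HUMAN_REVIEW
    if all(f.passed and f.confidence >= min_confidence for f in findings):
        return Route.AUTO_APPROVE
    return Route.HUMAN_REVIEW


clean = [AgentFinding(name, True, 0.95) for name in sorted(REQUIRED_AGENTS)]
flagged = [f for f in clean if f.agent != "compliance"] + [
    AgentFinding("compliance", False, 0.99)
]

print(orchestrate(clean).value)
print(orchestrate(flagged).value)
```

The rule fails closed: a compliance flag, a low-confidence finding, or a missing agent output all route the case to a human, which is the behavior a governance review would want to be able to evidence.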

For claims, the same logic applies across document summarisation, fraud flagging, settlement recommendation, payment release, litigation prediction and customer communication. A WTW survey finding worth flagging: only 14 percent of insurers currently run straight-through claims processing, but 36 percent plan to introduce it. That is the exact zone where decision architecture work is most urgent.

2. From model inventory to decision inventory

Many insurers are beginning to catalog their AI models. That work is necessary. It is also incomplete.

A model inventory tells you what tools exist. A decision inventory tells you where institutional judgment is being shaped.

The CRO needs visibility across every material decision where AI has influence. Underwriting eligibility. Pricing. Claims triage. Fraud investigation. Customer segmentation. Broker prioritization. Complaint handling. Reserving support. Operational risk alerts. Employee-facing decision tools.

This is where the Intelligent Layers architecture becomes practical. The governance layer cannot sit above the intelligence core like a policy PDF. It has to connect into the data layer, model layer, agent orchestration layer, workflow layer, and human oversight layer. Governance becomes the connective tissue of the Frontier Firm.

3. From annual assurance to continuous monitoring

AI systems are not static. Data shifts. Behavior changes. Prompts evolve. Vendors update foundation models. Users find workarounds. Agents interact with tools in ways designers did not anticipate.

A once-a-year review cannot manage a continuously adapting system.

The CRO's team will need assurance rhythms that look more like operational telemetry than legacy audit. Drift monitoring. Bias testing. Override analysis. Exception tracking. Customer impact review. Prompt and tool-call logging. Third-party model update monitoring. Incident response simulation. EIOPA's August 2025 Opinion explicitly calls for insurers to regularly monitor AI outcomes and to design human oversight into the system lifecycle, not bolt it on at the end.

This is where risk becomes real-time. Not because every decision needs a human watching in the moment, but because every material decision pathway needs to be observable, challengeable and correctable.
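Drift monitoring, one of the assurance rhythms listed above, is often implemented with the Population Stability Index (PSI), which compares the current distribution of a score or input against a baseline. The sketch below is one common formulation, not something EIOPA or the NAIC prescribes. The 0.10 and 0.25 thresholds are a widely used rule of thumb, and the bin proportions are made up for illustration.

```python
import math


def population_stability_index(
    expected: list[float], actual: list[float], eps: float = 1e-6
) -> float:
    """PSI between two binned distributions given as per-bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi: float, watch: float = 0.10, investigate: float = 0.25) -> str:
    """Map a PSI value to an action label using rule-of-thumb thresholds."""
    if psi >= investigate:
        return "investigate"
    if psi >= watch:
        return "watch"
    return "stable"


baseline = [0.25, 0.25, 0.25, 0.25]  # score distribution at validation time
current = [0.10, 0.20, 0.30, 0.40]   # hypothetical distribution this month

psi = population_stability_index(baseline, current)
print(f"PSI = {psi:.3f}, status = {drift_status(psi)}")
```

Run on a schedule against each material decision pathway, a check like this turns "data shifts" from an annual finding into an observable signal with a defined escalation threshold.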

4. From vendor due diligence to ecosystem accountability

Insurers will not build every AI capability themselves. They should not. The make-or-buy question creates a new risk boundary, though. If a third-party model, data provider or agent platform influences an underwriting or claims outcome, the insurer cannot outsource accountability for that decision.

The CRO must therefore expand third-party risk management into AI ecosystem assurance. That means asking vendors and partners sharper questions. What data trained the model? How is performance validated across customer segments? How are model updates communicated? What audit rights exist? What happens if the provider changes its foundation model? What are the escalation rights if the system behaves outside its intended scope?

The NAIC Model Bulletin makes vendor accountability explicit, requiring insurers to assess third-party AI systems and ensure contractual protections, including audit rights and cooperation with regulatory inquiries. The EU AI Act layers similar obligations on deployers, who keep responsibility for high-risk system use even when the underlying model is supplied by a third party. EIOPA's 2025 Opinion adds a practical instruction: where intellectual property restrictions limit data governance or explainability access, insurers should compensate through external audits, contractual clauses, due diligence reviews, and other complementary controls.

In AI, the vendor is not just a supplier. It can become part of the institution's decision architecture. That demands a different level of oversight.

5. From compliance defense to product confidence

The most forward-looking CROs will recognize something important. Governance is not only defensive. It is commercial.

As AI-related exposures expand, insurers with mature governance capabilities will be better positioned to define, underwrite, and price new forms of risk. AI liability cover, performance failure protection, technology assurance riders, and governance-linked endorsements are already emerging as the market learns how to transfer AI risk.

Munich Re has been writing AI performance risk since 2018 through its aiSure offering, which insures the financial losses arising when AI models fail to perform as promised. In February 2026, Mosaic Insurance partnered with Munich Re to launch a specialist AI product offering up to EUR/USD/CAD 15 million in initial coverage for AI developers and vendors against defined performance failures. Munich Re has also extended cover to corporate AI users through aiSure Own Damages, allowing companies that build or buy their own models to insure against drift and underperformance in operational use cases. Real-world examples include MKIII using aiSure to back AI-driven mortgage valuations, and Instnt using Munich Re reinsurance capacity to back its AI-powered fraud loss insurance program.

Aon's AI Risk 2026 agenda, published in March 2026, points to a market evolving in three parallel directions. First, AI-related exclusions and clarifying endorsements applied case by case. Second, affirmative AI cover added to cyber, E&O, media liability, and EPLI programs. Third, standalone AI products from carriers including Munich Re, Mosaic, AXA XL, Armilla, Vouch, and Testudo. Aon is explicit that capacity remains available, but underwriting scrutiny is rising, and governance documentation is becoming central to underwriting confidence. The Insurance Services Office (ISO) introduced two new commercial general liability endorsements (CG 40 47 and CG 40 48) effective January 2026 that allow carriers to exclude generative AI-related claims, ending what had been a period of silent AI coverage in many policies.

This matters for insurers themselves. The firms that understand AI governance from the inside will be better equipped to insure it on the outside. The CRO who can evidence how their own organisation governs AI will have a stronger foundation for evaluating how others govern it. That is a strategic advantage, and increasingly a strategic asset on the balance sheet.

AI governance belongs in the operating model, not the policy library

The Frontier Firm is human-led and agent-operated. That phrase is powerful because it contains a tension.

Human-led means accountability remains with people. Agent-operated means execution increasingly flows through digital labor. The CRO sits at the center of that tension.

If humans remain accountable but agents perform more of the work, the organization needs a new governance architecture for decision rights, oversight, escalation, evidence, and learning. Otherwise, it creates a dangerous illusion. Humans remain accountable for systems they cannot see, understand, or control. That is not responsible innovation. It is institutional fragility.

The Intelligent Layers governance layer must therefore answer six questions that map directly onto EIOPA's stated supervisory expectations and the NAIC's AIS Program requirements:

- Which AI systems influence regulated or high-impact decisions?

- What data, models, vendors, and tools shape those decisions?

- Where is human judgment required, and what does meaningful oversight actually mean in practice?

- How are decisions explained to customers, regulators, employees, and boards?

- How are harms detected, escalated, remediated, and learned from?

- How does the organization prove that governance is operating, not merely documented?

These are not questions for the technology team alone. They are board questions. They are CRO questions. They are questions of institutional legitimacy.

What should the CRO do this quarter?

The answer is not another abstract AI principles document. Start with the decision system.

First, map the ten most material AI-influenced decisions in underwriting, claims, pricing, fraud, and customer operations. Do not begin with the model list. Begin with where the customer, broker, employee, or regulator would feel the consequence.

Second, define decision rights for each one. What can the AI recommend? What can it execute? What requires approval? What can never be delegated? This should be explicit, not assumed, and it should connect to a written escalation pathway.

Third, create an evidence trail standard. Every high-impact AI decision should have a record of input data, model or agent contribution, human intervention, override, explanation, and outcome. That record should be sufficient for an examination by a state insurance commissioner under the NAIC Model Bulletin or by an EIOPA-aligned national competent authority in the EU.

Fourth, stress-test one workflow. Choose one live or near-live use case and run it through bias testing, drift analysis, explainability review, security validation, third-party assessment, conduct review, and customer impact analysis. Treat the exercise as a rehearsal for regulatory examination, not as a one-off audit.

Fifth, link governance to product strategy. Ask where your AI governance capability could inform new insurance offerings, endorsements, or assurance services for clients adopting AI. The organizations that learn to govern AI internally will be the ones best positioned to underwrite, price, and distribute AI cover externally.

This is how governance becomes growth infrastructure.
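The evidence trail standard in the third step can be sketched as a record type with a completeness check. The field names below mirror the elements listed in that step. The class name, the example values, and the rule that an override must name the human who made it are assumptions for illustration, not a regulatory schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionEvidence:
    decision_id: str
    input_data_ref: str                # pointer to the data used, not the data itself
    model_contribution: str            # what the model or agent recommended or did
    human_intervention: Optional[str]  # who reviewed and what they did, if anyone
    overridden: bool                   # whether a human changed the AI outcome
    explanation: str                   # reason that can be given to a customer or examiner
    outcome: str
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def gaps(self) -> list[str]:
        """Names of evidence elements that are missing or blank."""
        required = ("input_data_ref", "model_contribution", "explanation", "outcome")
        missing = [name for name in required if not getattr(self, name).strip()]
        if self.overridden and not self.human_intervention:
            missing.append("human_intervention")  # an override needs a named human
        return missing

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionEvidence(
    decision_id="UW-0001",
    input_data_ref="submissions/UW-0001.json",
    model_contribution="risk profiling agent recommended referral",
    human_intervention="underwriter reviewed and confirmed",
    overridden=False,
    explanation="Flood exposure above guideline limit",
    outcome="referred",
)

print(record.gaps())
print(record.to_json())
```

A record that reports gaps can be blocked from closing or routed to review, which is one way to show that governance is operating rather than merely documented.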

FAQ

How is the Chief Risk Officer's role changing because of AI in insurance?

The Chief Risk Officer's role is shifting from risk controller to AI governance architect. Traditional CRO accountability focused on solvency, conduct and operational exposures. As agentic AI enters underwriting, claims, pricing and customer decisioning, the CRO is becoming responsible for the decision system itself, including model design, data inputs, vendor relationships, human oversight and continuous monitoring of AI-influenced outcomes.

What does the NAIC Model Bulletin require for AI governance?

The NAIC Model Bulletin on the Use of Artificial Intelligence Systems by Insurers, adopted in December 2023, requires insurers to establish a written AI Systems Program with governance, risk management and internal controls. As of April 2026, 23 US states plus DC have adopted versions of the bulletin. Insurers should expect regulators to request documentation of governance frameworks, model validation, third-party oversight and consumer impact during examinations or market conduct actions.

Are insurance AI systems classified as high-risk under the EU AI Act?

Yes. Annex III of EU Regulation 2024/1689 explicitly classifies AI systems used for risk assessment and pricing in life and health insurance as high-risk. High-risk insurance AI systems must meet obligations on data governance, technical documentation, human oversight, accuracy, robustness, cybersecurity and post-market monitoring. Deployers must complete a Fundamental Rights Impact Assessment under Article 27 before first use. The Act becomes fully applicable on 2 August 2026.

Can AI risk be insured?

Yes, in increasingly specific ways. Munich Re has offered aiSure performance guarantee insurance since 2018, and in February 2026 Mosaic Insurance launched a specialist AI product providing up to EUR/USD/CAD 15 million in initial cover. These AI insurance solutions are designed to address a range of AI-related risks, such as performance errors, bias, and responsibility and accountability issues, by providing tailored coverage and risk management platforms for both AI vendors and users. Affirmative AI endorsements are being added to cyber, E&O and EPLI policies, while the ISO introduced new generative AI exclusion endorsements (CG 40 47 and CG 40 48) effective January 2026. Aon’s AI Risk 2026 outlook describes a market shifting from silent coverage to explicit terms, with governance documentation increasingly required for underwriting.

The CRO is becoming the architect of trust

Insurance has always been a trust business.

In the agentic era, trust cannot rest only on brand, balance sheet, or regulatory license. It has to be engineered into the systems that make and shape decisions.

The future CRO will still care about solvency, controls, compliance, and resilience. Those foundations do not disappear. The role expands. The CRO becomes the executive who ensures that AI-enabled decisions are visible, explainable, challengeable, lawful, fair, secure and aligned with the firm’s risk appetite.

That is not a narrower role. It is a more strategic one.

The insurers that lead will not be the ones that adopt AI fastest. They will be the ones that can prove why their AI decisions deserve trust. That proof will become a license to operate, and increasingly a license to compete.

Embedding responsible AI into governance structures is important not only to ensure compliance and accountability, but also to foster innovation and enable insurers to leverage AI for sustainable competitive advantage.

Read more on the Frontier Firm and Intelligent Layers at alchemycrew.ventures/blog.

Sources

WTW (March 2026). 2026 Advanced Analytics and AI Survey

NAIC (December 2023). Model Bulletin on the Use of Artificial Intelligence Systems by Insurers

NAIC. Implementation of NAIC Model Bulletin (state adoption tracker)

European Union (June 2024). Regulation (EU) 2024/1689 (AI Act), Annex III high-risk classification

Munich Re. aiSure: Insure the performance of your Artificial Intelligence solutions

Aon (March 2026). AI Risk 2026: What Business Leaders Need to Know